
The next wave of self-serve SaaS experiences will focus on operationalizing trust successfully.

When we build the free trial motion, we need to offer safe sandboxes, progressive feature unlocks, and clear guardrails that showcase to customers the AI's boundaries.

For end users to understand that the AI is responsible, safe, and reliable, the trial experience needs to include visible bumpers and frictions. This allows the end user to see where to refine, personalize, set up, and build confidence.

We measure trust through metrics like Trust Resolution Rate and Edit Distance: how often does the AI perform successfully, and how often does the end user need to edit what the AI generated? These two activation metrics define what a successful free trial experience looks like.

By building on something that the end user can trust, the AI can ultimately be deployed at scale, making the trial experience a successful conversion.

How do we close the AI trust gap without slowing adoption?

In classic PLG SaaS, we optimize the trial experience for Time-to-First-Value as the activation metric. This is the time between sign-up and the first moment when the product delivers something clearly useful and valuable. That might be creating a document, a notification firing, a successful workflow running, or a dashboard loading real data.

It's very straightforward… the faster the first value appears, the more likely the user will stick around. They see the WOW moment. But with AI agents, the first value moment isn't just producing any response… It's about whether that response is good and trustworthy.

That is where Time-to-Trust comes in. It is the time it takes, after the first useful output is shown, for the user to feel comfortable relying on the next one. “What does the next useful output look like? Is it going to be consistent moving forward?”

This Time-to-Trust moment is when the user understands, "Hey, it can run autonomously without me." Building on this trust is what drives adoption and conversion in a trial experience.

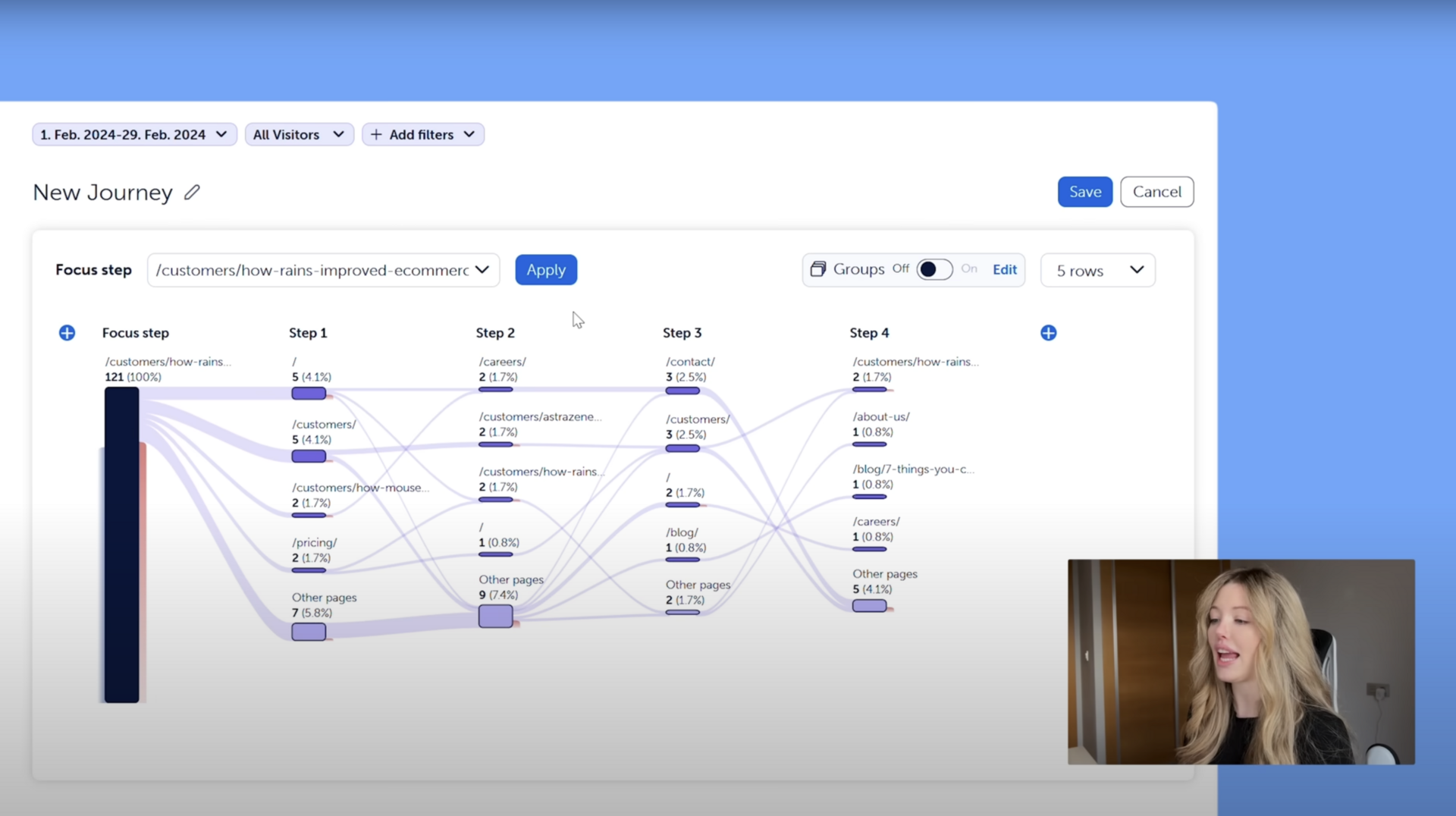

Below, I design the trial as a linear journey of activation milestones: a first successful AI resolution, an active copilot loop, and meeting the trust threshold where behavior is consistent. The activation milestones are mapped to get the end-user from stranger, to believer, to advocate. Each step has a specific purpose and metric instead of one generic "activation" event.

AI SaaS Activation Metrics to Track

Setup Activation Rate - % of users who personalize the AI, upload data, and complete several of these setup commitments

Time to the First Successful AI Resolution - a satisfactory AI output

Edit Distance - how often humans are rewriting and editing the AI response

Escalation Rate - routing to Human in the loop

Trust Resolution Rate (TRR) - the percentage of AI-generated responses that go out unchanged.
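To make the last two definitions concrete, here is a minimal sketch of how TRR and Escalation Rate could be computed from a log of tickets. The `Response` record and its field names are hypothetical, and it assumes escalated tickets are left out of the TRR denominator because no AI response went out for them.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Response:
    # Hypothetical record shape, one per ticket the AI touched.
    escalated: bool  # the AI handed the ticket to a human instead of answering
    edited: bool     # a human changed the AI draft before it was sent


def trust_resolution_rate(responses: List[Response]) -> float:
    """Share of AI-generated responses that went out unchanged."""
    # Assumption: escalated tickets produced no AI response, so skip them.
    generated = [r for r in responses if not r.escalated]
    if not generated:
        return 0.0
    return sum(not r.edited for r in generated) / len(generated)


def escalation_rate(responses: List[Response]) -> float:
    """Share of all tickets that were routed to a human."""
    if not responses:
        return 0.0
    return sum(r.escalated for r in responses) / len(responses)


# Made-up sample: 6 sent unchanged, 2 edited, 2 escalated.
sample = (
    [Response(escalated=False, edited=False)] * 6
    + [Response(escalated=False, edited=True)] * 2
    + [Response(escalated=True, edited=False)] * 2
)
print(trust_resolution_rate(sample), escalation_rate(sample))
```

Whether escalations belong in the TRR denominator is a product decision. Including them would make TRR and Escalation Rate move against each other by construction.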

What must be true before we automate, and how do we set this up step by step?

The setup moment for an AI agent means connecting it to help center documents and resolved support tickets, and capturing key policies like the company brand voice and tone, technical issues and rules, legal sensitivities, and the escalation path. This setup solves the cold start so that the AI can behave correctly downstream.

It's really a "garbage in, garbage out" challenge.

These documents, the help center, and the knowledge base need to be as up-to-date as possible so that no answers are incorrect. In the setup onboarding experience, we need to add friction to let the end user know there are certain boundaries and guardrails.

In the onboarding wizard, guide the end user to upload one high-quality use case document at a time, whether it's for forgotten passwords, scheduling refunds, etc., and let the end user test the AI to see if it actually performs against those documents. Each setup step should involve one core action: personalize, upload, and test immediately. That way you build many small commitments, and with them trust, as you ramp the user up to their Time-to-Trust activation moment.

The success metric is the Setup Activation Rate: the % of users who personalize the AI, upload data, and complete several of these commitments.
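As a rough illustration, Setup Activation Rate can be counted from the setup steps each trial user has completed. The step names and the `min_steps=2` cutoff for "multiple commitments" are assumptions for this sketch, not fixed definitions.

```python
from typing import Mapping, Set

# Hypothetical step names for the onboarding wizard.
SETUP_STEPS = {"personalize", "upload", "test"}


def setup_activation_rate(
    steps_by_user: Mapping[str, Set[str]], min_steps: int = 2
) -> float:
    """Share of trial users who completed at least `min_steps` setup steps."""
    if not steps_by_user:
        return 0.0
    activated = sum(
        1
        for steps in steps_by_user.values()
        if len(steps & SETUP_STEPS) >= min_steps
    )
    return activated / len(steps_by_user)


# Made-up trial cohort.
cohort = {
    "u1": {"personalize", "upload", "test"},
    "u2": {"upload"},
    "u3": {"personalize", "test"},
    "u4": set(),
}
print(setup_activation_rate(cohort))
```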

How do we create experiences that make customers believe the AI understands them?

The first activation milestone is the Time to the First Successful AI Resolution. This should happen in a safe sandbox using historical tickets and data, not through live traffic.

The end user picks already resolved tickets and compares them against what the AI would answer, confirming that the AI is guided by the documentation. Then, they can see that the AI can handle real-life examples using the same language and rules that were set up.

This is the first big trust and commitment moment, showing what the AI can do with the next 50 tickets. It turns the user from a stranger to the AI into someone who is curious and on the way to becoming a cautious believer.
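One way to track this milestone is to log each sandbox test with a timestamp and whether the user marked the AI answer as satisfactory. The sketch below assumes that event shape (it is hypothetical) and returns the time from sign-up to the first satisfactory result.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


def time_to_first_resolution(
    signup: datetime, tests: List[Tuple[datetime, bool]]
) -> Optional[timedelta]:
    """Time from sign-up to the first sandbox test marked satisfactory.

    `tests` holds (timestamp, satisfactory) pairs. Returns None if the
    user has not yet seen a satisfactory AI resolution.
    """
    successes = [ts for ts, ok in tests if ok and ts >= signup]
    return min(successes) - signup if successes else None


# Made-up sandbox session.
signup_at = datetime(2024, 1, 1, 9, 0)
sandbox_tests = [
    (datetime(2024, 1, 1, 9, 10), False),
    (datetime(2024, 1, 1, 9, 25), True),
    (datetime(2024, 1, 1, 9, 40), True),
]
print(time_to_first_resolution(signup_at, sandbox_tests))
```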

Compared to the previous PLG SaaS guided tour onboarding, this is more of a "guided proof" onboarding. The end user gets an immediate "aha" moment in a safe sandbox environment, using their data without any risk and doing it in a conservative way.

Upload documents → Test → Review → Upload again → Improve → Test → Re-test.

It’s a cycle of validation and trust building.

How do we progress from low-risk to higher-risk AI safely?

After the end user sees that the AI is behaving consistently on past tickets, the next activation milestone is to deploy it into a live workflow, not as an autopilot but as a co-pilot. In this co-pilot environment, the AI drafts responses, and the human agent reviews, edits, and sends them.

An example UX flow: a ticket comes in, the AI creates the response, and the human sees the draft, tweaks it, rates it, and provides feedback for future suggestions. The metric here is the Edit Distance. We are measuring how often, and how much, humans rewrite and edit the AI draft. Early on, humans might change upwards of 50 to 70% of the text, but as the AI learns from edits, rules, and documentation updates, this edit distance will shrink. The lower the edit distance, the more commitment and trust we will see from the end user in the AI.

Another metric to track is the Escalation Rate. How often does the AI recognize that it doesn't know the answer and has to hand the decision back to a human agent? This is how we ensure the human-in-the-loop process is built into this safety environment. The AI cannot do it all. It needs guidance and shaping to make sure the system is behaving properly.
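Here is one possible way to put a number on Edit Distance, as a sketch. It uses Python's standard `difflib.SequenceMatcher` on words, which gives a similarity-based ratio rather than a strict Levenshtein distance. The function name and the word-level granularity are my assumptions.

```python
from difflib import SequenceMatcher


def edit_distance_ratio(draft: str, sent: str) -> float:
    """Fraction of the AI draft that changed before sending.

    0.0 means the draft went out unchanged, 1.0 means nothing in common.
    Compares word by word, so small typo fixes count as one changed word.
    """
    a, b = draft.split(), sent.split()
    if not a and not b:
        return 0.0
    similarity = SequenceMatcher(None, a, b, autojunk=False).ratio()
    return 1.0 - similarity


draft = "You can reset your password from the login page"
sent = "You can reset your password from the account settings page"
print(round(edit_distance_ratio(draft, sent), 2))
```

Averaging this ratio per week of the trial gives the trend line: if the AI is learning from edits, the weekly average should fall.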
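A hand-off rule like this can be sketched in a few lines. Everything here is hypothetical: the category names, the confidence score (assumed to come from your AI system as a value between 0 and 1), and the 0.8 threshold.

```python
# Hypothetical low-risk categories the AI is allowed to answer on its own.
LOW_RISK_CATEGORIES = {"password_reset", "status_update"}


def should_escalate(
    category: str, confidence: float, threshold: float = 0.8
) -> bool:
    """Return True when the ticket should go to a human agent.

    Escalates anything outside the low-risk categories, and any ticket
    where the AI's own confidence is below the threshold.
    """
    return category not in LOW_RISK_CATEGORIES or confidence < threshold


print(should_escalate("password_reset", 0.95))  # confident, low risk
print(should_escalate("password_reset", 0.50))  # low confidence
print(should_escalate("refund", 0.99))          # outside low-risk set
```

Counting how often this returns `True` over all tickets gives the Escalation Rate.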

Previously, adding intentional friction during onboarding increased our Day 30 retention to 67%.

We started building our AI product with minimal onboarding, on the idea that the “AI just works.” But it led to more confusion about why the AI behaved the way it did. When the AI created a poor output, the user churned.

We added guardrails during onboarding, making sure users understand that they can refine the AI and that the AI is actively improving. This gave users more trust in how our AI system operates, turning frustration into patience.

This level of back and forth, building on consistency, turns cautious believers into users who fully trust the AI with the tasks they have handed over. We have a new level of trust and certainty.

What happens when the AI model is uncertain, and how do we measure trust?

After deploying as a co-pilot, we can measure whether the AI is indeed helpful for users, and we can observe whether the admin's trust in the AI is building. The signal is that the AI consistently handles a high volume of tickets without changes. This is measured by a metric called the Trust Resolution Rate (TRR), the percentage of AI-generated responses that go out unchanged.

High TRR, Low Escalation Rate

As the Trust Resolution Rate climbs and stabilizes, the administrator shifts from checking everything to relying on the AI most of the time. If the Trust Resolution Rate is high but the escalation rate drops to zero, the AI might be overconfident. This signals the administrator to review the tickets, as a healthy balance between the escalation rate and the Trust Resolution Rate is important.

Low TRR, Stagnant Edit Distance

If the Trust Resolution Rate is low and the edit distance is not improving, then the trial has not delivered consistent value. AI answers are not improving, the AI is not learning to resolve the tickets, and its drafts still require heavy editing to ensure accuracy. Over time, the edit distance needs to shrink.

High TRR, Low Edit Distance, Steady or Low Escalation Rate

An ideal combination would be a high Trust Resolution Rate, upwards of 60-70%, a decreasing edit distance (less editing needed), and a reasonable escalation rate of around 10-20%, which together show system consistency and performance. Continue to enforce a boundary by having a human in the loop for escalation. The AI should handle use cases in low-risk categories like password resets and status updates, where it is confident.
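The three scenarios above can be turned into a simple health check, sketched below. The cutoffs are read off the ranges in this section (60% for a "high" TRR, 10-20% for a reasonable escalation rate). The `edit_trend` input and the returned labels are my assumptions.

```python
def trial_health(trr: float, edit_trend: float, escalation: float) -> str:
    """Classify a trial from its trust metrics.

    trr         Trust Resolution Rate, 0..1
    edit_trend  change in average edit distance over the period
                (negative means edits are shrinking)
    escalation  Escalation Rate, 0..1
    """
    high_trr = trr >= 0.60
    if high_trr and escalation <= 0.0:
        # High TRR with no hand-offs: the AI might be overconfident.
        return "review: possible overconfidence"
    if not high_trr and edit_trend >= 0:
        # Low TRR and edits not shrinking: no consistent value yet.
        return "at risk: no consistent value"
    if high_trr and edit_trend < 0 and 0.10 <= escalation <= 0.20:
        return "healthy"
    return "monitor"


print(trial_health(trr=0.70, edit_trend=-0.10, escalation=0.15))
print(trial_health(trr=0.80, edit_trend=-0.10, escalation=0.0))
print(trial_health(trr=0.30, edit_trend=0.0, escalation=0.15))
```

Treat the cutoffs as starting points to tune per product and ticket mix, not as benchmarks.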

This shows that the trial has successfully created a scalable system. The administrator has built trust in how the system behaves, with the ability to edit, refine, and take over if necessary.

The Next Wave of AI PLG Trials Is About Building Trust and Converting to Paid

The next decade of SMB self-serve growth will be won by the company that successfully operationalizes trust.

When we build free AI trials using safe sandboxes, progressive capability unlocks, and transparent confidence guardrails, we are designing how trust gets built. We are adding intentional friction that builds reassurance and confidence in AI pilots. By measuring trust through metrics like Trust Resolution Rate and Edit Distance, we are aligning product value signals to revenue.

A free trial successfully converts to paid when the user hits these activation and trust milestones.


I write weekly about what I learn from launching and leading PLG. Feel free to subscribe.

I am Gary Yau Chan. 3x Head of Growth. 2x Founder. Product Led Growth specialist. 26x hackathon winner. I write about #PLG and #BuildInPublic. Please follow me on LinkedIn, or read about what you can hire me for on my Notion page.
