
Rebuilding PLG Activation for AI Outcome-based SaaS

AI is changing classic SaaS economics.

Intercom’s Fin AI Agent became a nine‑figure ARR product by charging per “Resolution,” not per seat. That shift is becoming common in SaaS because AI model costs scale with usage.

But most teams are only changing pricing, not activation.

They swap “per seat” for “per resolution” and keep the same old PLG motions: a free trial, a feature tour, and an invite-your-team prompt.

Seat-based PLG optimizes for “a user took an action,” while outcome-based PLG must prove “the AI did the job correctly, every time.” Until self-serve onboarding is rebuilt around that outcome, the new pricing change won’t make sense to the customer.

How Is Seat-based Activation Different from Outcome-based Activation?

In seat-based SaaS, activation is personal and fast. A user signs up, clicks around, creates something, and invites a teammate. The aha moment happens in single-player mode ("I can see how this helps me"), and it takes only minutes to create something (a doc, project, or dashboard). Then they invite teammates, add more seats, and switch to multiplayer mode.


With outcome-based AI products like Intercom Fin, the buyer is usually an admin, and their question changes to “Will this work reliably with my content and use cases? How often will it fail?” Their risk is threefold: a bad AI answer in front of customers, a bill that still arrives, and no value delivered (or not the outcome they were expecting).

| Aspect | Seat-Based | Outcome-Based (Fin-style) |
| --- | --- | --- |
| Aha moment | User action completed | Verified AI outcome delivered |
| Primary buyer | End-user (personal value) | Admin (workflow ROI) |
| Setup required | Minutes (create, invite, explore) | Hours (knowledge, routing, guardrails) |
| Pricing risk | Fixed, predictable | Variable, tied to AI performance |
| Trust driver | Familiar UX, peer adoption | Accuracy ratings, visible fix loops |
| Failure mode | Low engagement → quiet churn | One wrong answer → permanent distrust |

Seat-based PLG is about helping people do more. Outcome-based PLG is about getting systems to do work autonomously, and proving that those systems are safe, accurate, and worth paying for.

In outcome-based AI, a bad experience, especially one that's billed for, can permanently damage trust in the product, the company brand, and the pricing model all at once.

Self-serve onboarding for an outcome-based product has no margin for error.

That means the self-serve journey must be designed for an admin configuring a workflow, not an end-user exploring features.

How to Build Trust in AI SaaS Products?

If seat-based PLG is about unlocking features, outcome-based PLG is about unlocking trust.

An admin doesn't sign up for an AI tool and immediately trust it to talk to their customers. They start as a skeptic. Your onboarding has to guide them through a progressive feedback loop (uploading, checking, testing, and refining) that deliberately moves them from stranger to advocate.

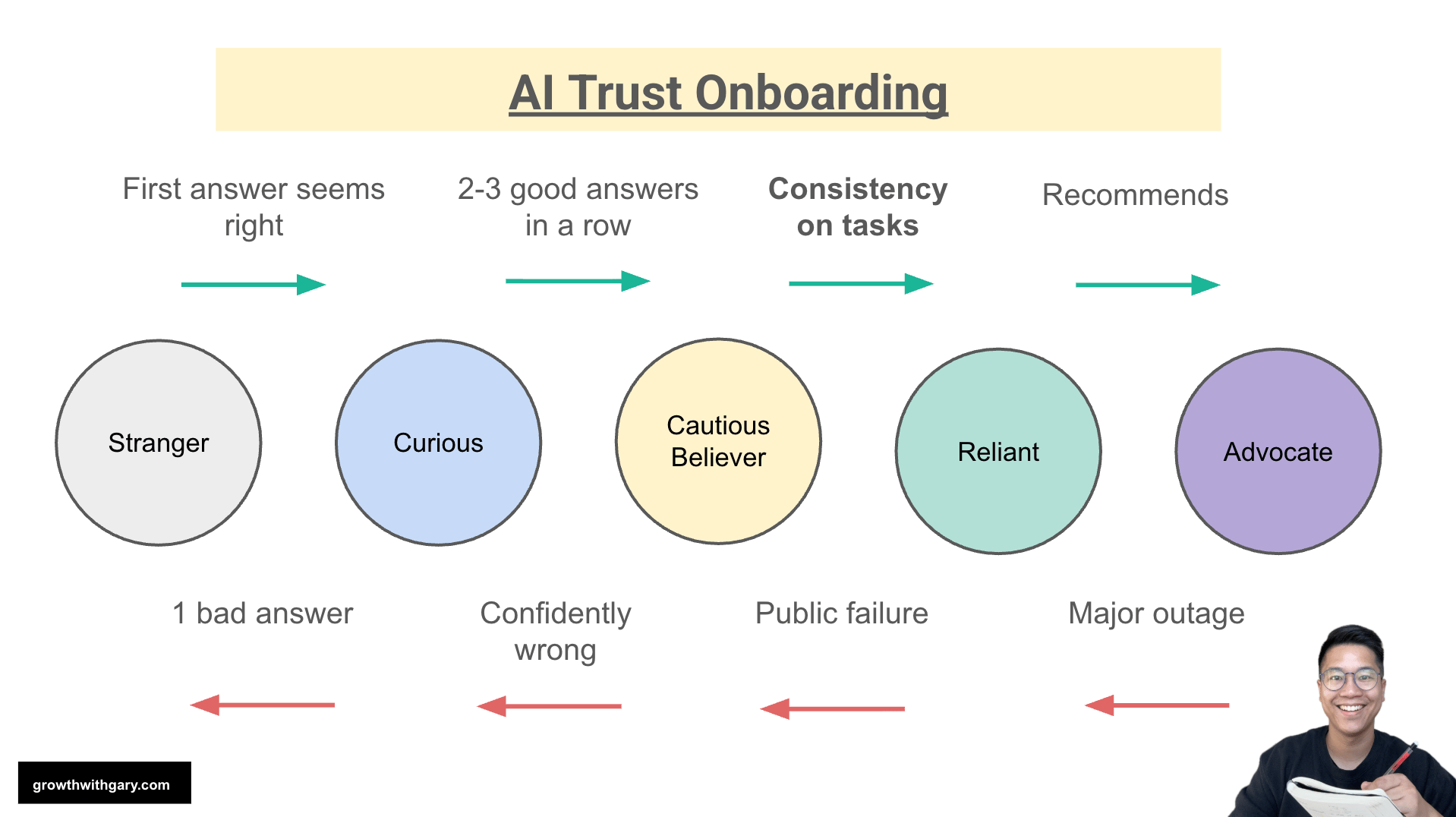

Look at the psychological journey:

Stranger → Curious: Connect one knowledge source and see the AI generate a decent answer from their own docs. (The first "wow" moment).

Curious → Cautious Believer: Use the test console to ask 3-4 real backlog questions, and the AI gets them right.

Cautious Believer → Reliant: Deploy it on a low-risk channel with escalation handoffs. The AI consistently handles basic tasks without human help.

Reliant → Advocate: They see a high resolution rate on their dashboard and start recommending the tool to others.

But notice the bottom arrows. Trust is fragile.

If you push an admin to go live before they are a "Cautious Believer," one bad answer drops them back to square one. A confidently wrong answer in front of a real customer causes a public failure, destroying trust completely.

This is why you can't just drop an admin into a dashboard and say "Go." The onboarding experience must be structured to force this testing and feedback loop, building reassurance at every single step before moving to the next.
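One way to make this journey concrete is to treat it as a small state machine. The sketch below is only an illustration: the stage names come from the journey above, while the event names, the one-step-at-a-time rule, and the `can_go_live` gate are assumptions for the example, not how Intercom or any other product tracks trust.

```python
from enum import IntEnum


class TrustStage(IntEnum):
    STRANGER = 0
    CURIOUS = 1
    CAUTIOUS_BELIEVER = 2
    RELIANT = 3
    ADVOCATE = 4


# Hypothetical event names. Each event unlocks exactly one forward step,
# and only from the stage listed here.
ADVANCE_ON = {
    "answered_from_own_docs": TrustStage.STRANGER,
    "backlog_tests_passed": TrustStage.CURIOUS,
    "low_risk_deploy_stable": TrustStage.CAUTIOUS_BELIEVER,
    "high_resolution_rate_seen": TrustStage.RELIANT,
}


def next_stage(stage: TrustStage, event: str) -> TrustStage:
    """Return the admin's trust stage after an onboarding event."""
    if event == "bad_live_answer":
        # A confidently wrong answer in front of a customer resets trust.
        return TrustStage.STRANGER
    if ADVANCE_ON.get(event) == stage:
        return TrustStage(stage + 1)
    # Events that arrive out of order do not let the admin skip a stage.
    return stage


def can_go_live(stage: TrustStage) -> bool:
    """Gate the go-live prompt until the admin is at least a Cautious Believer."""
    return stage >= TrustStage.CAUTIOUS_BELIEVER
```

The useful part is the gate: onboarding only offers "go live" once `can_go_live` is true, so the admin cannot reach a public failure before the testing loop has done its job.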

Why CAC Increases With Outcome-Based Pricing (And How PLG Fixes It)?

When companies move to outcome-based pricing without fixing activation, CAC increases.

Without a new sales playbook, sales reps revert to selling what they know: seat-based models, where the motion is proven. Now they are selling something called a “Resolution,” which can be a moving target and is difficult to forecast.

As a result, the sales-led POC cycle, which previously ran on established playbooks and lasted 30 days, now stretches to 60 days. Without the proper playbook, deals stall as everyone negotiates and tries to figure out what a Resolution or a resolved ticket even means. Buyers need more education and want proof before committing.

Seat-based buyers have an easy question: "I just buy seats. How many people on my team need to use this?" Outcome-based buyers need verification: "Show me that this works." This leads to a very expensive back-and-forth process. LTV is also uncertain, so it is hard to know how much marketing spend is justified.

The big structural fix is to build in self-serve activation, the PLG experience: a free trial or POC that lets customers connect their own knowledge base and content, test the AI, and validate real resolutions before signing or buying. Because LTV can be uncertain, SaaS companies will hesitate to spend thousands of dollars on a sales-run proof of concept. PLG lets the product demonstrate its own value instead. The PLG experience becomes a requirement, and it makes the outcome-based pricing model run more smoothly operationally.

How Intercom Fin Designs Outcome-based Activation

How do you design PLG onboarding for an outcome-based support AI like Intercom Fin?

What exactly happens in the first 1 minute, 10 minutes, 1 hour, 24 hours, and 7 days that gets an admin from “curious” to “confident,” and ready to pay for resolutions?

First 1 Minute: The Promise Is Crystal Clear

The admin lands on a signup page that doesn’t just sell “AI support.” It makes a very specific promise:

“Fin AI Agent: $0.99 per resolution—pay only for resolved customer conversations.” Source: Fin Pricing

The moment they create an account, they see three things:

Progress through the Fin framework: Content → Test → Deploy → Analyze (Intercom calls this the Fin Flywheel).

A usage/resolutions indicator.

A clear starting action: “connect your knowledge source and let Fin learn from it.”

The most important psychological step in activation is when the admin understands the setup, the cost, and the first action to take to personalize the product.

First 10 Minutes: The Setup Moment. Connect Knowledge, Show Real Intelligence

The highest-leverage activation action for an outcome-based AI agent is connecting a real knowledge source (for example, pasting a help center URL, uploading a PDF or policy doc, or connecting an existing knowledge base).

Having the AI answer a sample of real questions using the customer’s own content is the WOW moment.

Don’t rely on sandbox data or examples. They can show how the product works, but the user's focus should be on setting up personalization, so trust is built on how the AI works for them, not on an example.

Seeing their content used intelligently is a deeper aha than any canned demo.

Upload something, anything!

We encourage our customers to upload their current or past security questionnaires into our AI. We want them to see that our AI actually works and solves their questionnaires, rather than just using sandbox data.

When they see that compliance answers are built from their own company information, they go from being a skeptic to a cautious believer.

First 1 Hour: Build Trust Through Testing and Control

By the end of the first hour, admins should feel two things: “This AI is actually pretty good with my content,” and “If it goes wrong, I know how to control and correct it.”

By constantly testing it with real and recent customer questions, the admin can rate the answers and see why the AI responded in a certain way. They can then add specific guidance regarding tone, business policies, rules, and escalation triggers for handoff to a human.

If an answer is wrong, the admin should know:

“I can refine it in Guidance.”

“I can edit the content it is fetching from in Training.”

“I can just hit Escalation for these situations.”

The first hour is about letting the admin know they can refine and control the AI. That gives the admin relief that issues can be fixed, and it builds trust in the platform.

Refine with Fin by asking clarifying questions.

“Escape valve” for AI agent - Escalate to Human

AI is still a black box. End users just want to know: where did it get that information? Why did it behave the way it did?

Revealing the reasoning and citations establishes another level of trust and reassurance.

At Clarity Inbox, we show the AI's reasoning so that customers feel reassured. As more trust is built, users become confident enough that they no longer need to see the reasoning.

First 24 Hours: Ship the First Live Resolution

By the end of the day, the AI should be able to handle most customer questions in the testing environment.

This means that the admin can safely deploy the AI in a low-risk channel, such as a subset of pages, a beta landing page, or a blog post.

They stay conservative because they still have to prove that the AI is delivering and build confidence with internal stakeholders.

The goal of day one is to go live and get at least 5 resolutions through the system.

At this point, the credits meter moves. The admin has seen:

Which conversations counted as “resolved by AI.”

How ratings looked.

That there was a clear off-ramp to humans when needed.

Now the admin understands the outcome-based pricing model.

Conversations > Resolutions > Satisfaction rates
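The funnel above is simple arithmetic, and seeing it worked through once makes the pricing model easier to reason about. Here is a minimal sketch, assuming each conversation carries a `resolved_by_ai` flag, an `escalated` flag, and an optional 1-5 rating. Those field names and the rating scale are hypothetical; the only number taken from the source is the $0.99 per resolution price, and how a vendor actually decides that a conversation counts as resolved is not modeled here.

```python
from dataclasses import dataclass
from typing import Optional

PRICE_CENTS_PER_RESOLUTION = 99  # $0.99, from the pricing line quoted earlier


@dataclass
class Conversation:
    resolved_by_ai: bool           # assumed flag: the AI closed it with no human help
    escalated: bool = False        # assumed flag: handed off to a human
    rating: Optional[int] = None   # assumed 1-5 customer rating, None if unrated


def summarize(conversations):
    """Roll a list of conversations up into the funnel the admin sees."""
    total = len(conversations)
    resolutions = sum(c.resolved_by_ai for c in conversations)
    ratings = [c.rating for c in conversations
               if c.resolved_by_ai and c.rating is not None]
    return {
        "conversations": total,
        "resolutions": resolutions,
        "escalations": sum(c.escalated for c in conversations),
        "resolution_rate": resolutions / total if total else 0.0,
        "avg_rating": sum(ratings) / len(ratings) if ratings else None,
        # Only AI-resolved conversations are billed.
        "bill_usd": resolutions * PRICE_CENTS_PER_RESOLUTION / 100,
    }


# Made-up day-one sample: 5 AI resolutions and 3 human handoffs.
day_one = (
    [Conversation(resolved_by_ai=True, rating=5)] * 3
    + [Conversation(resolved_by_ai=True, rating=4)] * 2
    + [Conversation(resolved_by_ai=False, escalated=True)] * 3
)
summary = summarize(day_one)
```

In this made-up sample, 5 of 8 conversations are resolved by the AI, so the resolution rate is 62.5% and the bill is $4.95. The three escalated conversations cost nothing, which is exactly the point the admin needs to see on day one.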

First 7 Days: Create a Resolution Learning Loop

The first week of activation should show that the AI is performing and improving with use. Activation should cement the habit of checking the resolution dashboard, which displays the number of resolutions, the number of conversations, the resolution rate, satisfaction, and the areas where the AI is struggling.

This allows the admin to make improvements around content, guidance, and escalation rules. Product-led onboarding should make these fixes easy and discoverable, helping users understand how to fix issues and move forward to the next set of engagement milestones.

These milestones include adding more knowledge, deploying to additional channels, increasing the exposure rate, and inviting teammates.

This closes the loop:

AI attempts resolutions.

Admins and users rate outcomes.

The system surfaces problem cases.

Admins fix content or rules.

Resolution rates improve, making every future credit feel more valuable.

At that point, credits start feeling like a lever, not a gamble.
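The "system surfaces problem cases" step in the loop above can be sketched in a few lines. This is an assumption-heavy illustration: the `topic`, `resolved`, and `rating` fields, the rating threshold, and the idea of grouping by topic are all hypothetical choices for the example, not a description of any vendor's dashboard.

```python
from collections import Counter


def struggling_topics(conversations, min_rating=3, top_n=3):
    """Rank topics by how often the AI failed or was rated poorly.

    Each conversation is a dict with assumed keys:
    'topic' (str), 'resolved' (bool), 'rating' (int 1-5, or None if unrated).
    """
    failures = Counter()
    for c in conversations:
        low_rating = c["rating"] is not None and c["rating"] < min_rating
        if not c["resolved"] or low_rating:
            failures[c["topic"]] += 1
    # The most frequent failure topics come first.
    return failures.most_common(top_n)


# Made-up week of conversations.
week = [
    {"topic": "refunds", "resolved": False, "rating": None},
    {"topic": "refunds", "resolved": True, "rating": 1},
    {"topic": "shipping", "resolved": False, "rating": None},
    {"topic": "login", "resolved": True, "rating": 5},
    {"topic": "login", "resolved": True, "rating": None},
]
problem_areas = struggling_topics(week)
```

For this sample, `problem_areas` puts refunds first with two failures and shipping second with one, which tells the admin where to fix content or rules next.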

How to apply Onboarding Bumpers and PQLs to Outcome-based SaaS?

It starts with finding the right free credit limit: enough for the buyer to train the AI and think in terms of outcomes before they commit to purchasing.

Previously, when I was selling AI-answered security questionnaires, we gave new customers a small batch of free AI credits. This allowed them to sample with pre-filled data or upload a real security questionnaire and have the AI draft a response.

Customers experienced a bigger WOW moment when it was fully customized to their situation. Uploading their own security questionnaires and seeing the AI-generated answers helped them realize that the outcome was solving a real job that previously took weeks.

While the model looked usage-based, it was actually outcome-based, because the AI-generated answers had to pass audits and unblock sales deals for compliance. If they didn't, customers wouldn't want to use more credits in the future. That's why activation really focuses on trust, personalization, and refinement, not just hitting "generate" and getting results like a slot machine.

When an admin skips testing and gets the wrong answer in a live conversation, then receives a bill, they don't think, "I should have tested more." They think the AI doesn't work, and you don't get a second chance with AI products.

Making sure activation happens (the admin connects the right content, tests before deploying, and keeps refining) will reduce downstream churn. To achieve this, we need to implement product onboarding bumpers. These can remind the admin to connect their help center to see real answers, prompt them to go live on chat after a real test, and then nudge them to review the results.

For example, a notification could say, "Your resolution rate improved 12% this week. Add more content to keep improving." The key is making sure the admin is engaging with the results, making refinements, and not doubting the effectiveness of the AI.
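A bumper system like this can be as simple as an ordered list of checks that returns the first missing step. The sketch below is hypothetical: the `AdminState` fields, the threshold of three tests (echoing the "3-4 real backlog questions" step earlier), and the message copy are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional

MIN_TESTS_BEFORE_LIVE = 3  # assumption, echoing the 3-4 backlog questions step


@dataclass
class AdminState:
    knowledge_connected: bool = False
    tests_run: int = 0
    live: bool = False
    reviewed_results: bool = False


def next_bumper(state: AdminState) -> Optional[str]:
    """Return the next onboarding nudge, or None if every step is done."""
    if not state.knowledge_connected:
        return "Connect your help center to see real answers from your own content."
    if state.tests_run < MIN_TESTS_BEFORE_LIVE:
        remaining = MIN_TESTS_BEFORE_LIVE - state.tests_run
        return f"Test the AI with {remaining} more real customer question(s) before going live."
    if not state.live:
        return "Your tests are done. Ready to go live on a low-risk chat channel?"
    if not state.reviewed_results:
        return "Your first live results are in. Review resolutions and ratings."
    return None
```

The order of the checks is the design choice that matters: an admin is never nudged to go live before they have connected real content and run real tests.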

Why Outcome-based PLG Wins at Scale?

Outcome-based PLG shows that as you build trust and refine the knowledge base content, the AI improves and achieves more resolutions.

Every activation step serves as a reminder to test, refine, set guardrails, deploy conservatively, and gradually establish the workflow.

Outcome-based is not just a pricing change but a complete redesign of activation from the first minute to the first seven days.

Build trust with the customer so they feel reassured, can use the AI safely, and know that what they are billed for is fair and understood.

I write weekly about my learning on launching and leading PLG. Feel free to subscribe.

I am Gary Yau Chan. 3x Head of Growth. 2x Founder. Product Led Growth specialist. 26x hackathon winner. I write about #PLG and #BuildInPublic. Please follow me on LinkedIn, or read about what you can hire me for on my Notion page.
